The First AI Bottleneck Made Millionaires. The Next One Is Forming Now.

In November 2023 – just a year after ChatGPT’s debut – OpenAI CEO Sam Altman made a surprising decision.

He stopped taking new customers.

New signups for ChatGPT’s paid subscriptions were suddenly suspended. Anyone hoping to access OpenAI’s most advanced models was simply turned away.

This wasn’t because demand had collapsed. It was because demand had exploded.

In its first year, ChatGPT reached 100 million weekly active users. And after the company’s first developers conference on November 6, 2023 – where it unveiled GPT-4 Turbo and custom GPTs – the number of would-be ChatGPT Plus subscribers surged.

Just a week later, Altman had to start turning away folks with credit cards in hand.

Altman summed up the moment with a simple emoticon: the frowny face.

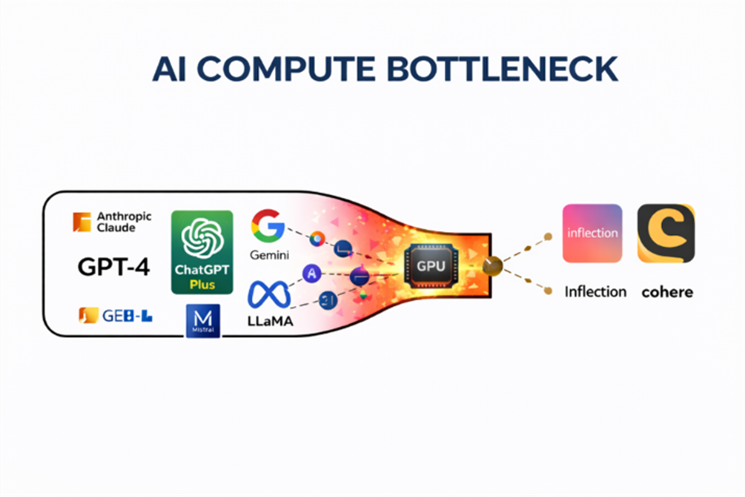

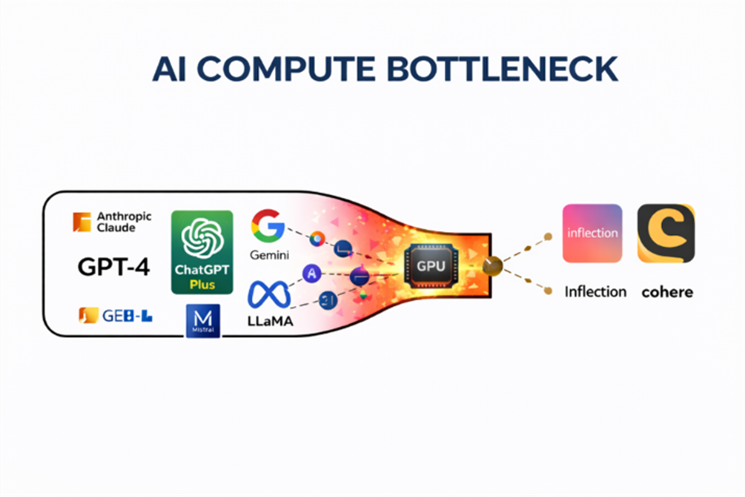

Typically, “quitting while ahead” is advantageous in debating, gambling, and even trading. But it’s not recommended in business. OpenAI, however, had no choice. Demand for ChatGPT had exceeded the company’s GPU capacity. One of the most advanced AI companies in the world had run out of “compute.” Other AI startups ran into the same issue: GPUs were effectively sold out. The AI models were ready, and consumer demand was there, but the hardware to run them at scale wasn’t. GPU supply chains were still running at pre-AI demand levels. It became the first great bottleneck of the AI era.

There weren’t enough chips, networking components, or infrastructure to power the AI explosion. In effect, the entire industry hit a wall.

Two companies sat at the center of this compute bottleneck… And both of these AI infrastructure providers went on to capture enormous gains early in the AI boom.

Today, let’s examine both of these companies – and how they made early investors millionaires. Then, I’ll reveal how it’s not too late for investors to jump in on AI’s next wave of millionaire-maker bottlenecks.

We’ll get started with Nvidia Corp. (NVDA). It sounds hard to believe now, but for decades, Nvidia was a terrible stock…

Turning a Compute Shortage Into a Gold Mine

Jensen Huang co-founded Nvidia in the early 1990s to supply graphics processors to the videogame industry. But gaming GPUs were a cyclical business. Investors who bought near the peaks often saw losses of 70% or more during downturns. Ten thousand dollars invested in Nvidia in October 2018, for example, had shrunk to just $4,400 in less than three months.

While Nvidia gradually expanded into automotive and mobile chips, it wasn’t until generative AI arrived that the company turned into a great stock.

AI models require enormous computing power to train and operate. And once ChatGPT burst onto the scene, the companies racing to build AI systems suddenly needed vast quantities of high-performance GPUs. The problem was that almost no one could supply them.

Nvidia’s chips – particularly the H100 – quickly became the gold standard for AI training. As demand surged, the company went from selling $2,000 graphics cards to gamers to selling $30,000 GPUs to data-center operators. At the peak of the shortage in 2023, some H100 cards were reportedly reselling for more than $40,000 on eBay.

And as the AI boom accelerated, the company’s data center division exploded. Within a year, it became Nvidia’s largest business segment, with AI chips driving the vast majority of the growth.
In effect, Nvidia turned the compute bottleneck into a business moat. Anyone building advanced AI models needed Nvidia’s GPUs. Today, more than two years later, nearly every major AI company still depends on them.

Investors who recognized the compute bottleneck early were richly rewarded. Going back to the launch of ChatGPT as the start of the AI compute constraint, Nvidia shares have surged nearly 1,000%. That’s the power of getting positioned before a bottleneck breaks open.

But GPUs were only part of the story…

The Networking Bottleneck Few Saw Coming

Training and operating AI models requires far more than powerful GPUs. It also requires thousands of those GPUs to communicate with each other at extraordinary speeds inside massive data centers.

If data can’t move quickly enough between chips, the GPUs sit idle waiting for information. And idle GPUs are enormously expensive. This created another bottleneck: data center networking.

That’s where Broadcom Inc. (AVGO) comes in.

Broadcom specializes in the networking semiconductors that allow enormous clusters of GPUs to function as a single system. Its Tomahawk and Jericho chips help move data through the world’s fastest data centers while preventing congestion. As hyperscalers raced to build ever larger AI data centers, demand for these networking chips surged.

Broadcom also began designing custom AI accelerator chips for companies such as Alphabet Inc. (GOOG) and Meta Platforms Inc. (META) – processors optimized for specific AI workloads. Together, these businesses positioned Broadcom directly in the path of the AI infrastructure boom.

Nvidia solved the compute bottleneck. Broadcom helped connect that compute.

Since the launch of ChatGPT, Broadcom shares have soared roughly 600%. And just like Nvidia, the company’s gains were driven by investors recognizing a bottleneck before the rest of Wall Street fully understood it.

But the compute and networking constraints that fueled those gains are beginning to ease.
The next phase of the AI boom will revolve around entirely different bottlenecks…

AI’s Next Bottlenecks Could Be Even Bigger

At the start of the AI boom, the biggest gains didn’t come from the companies building AI applications. They came from the companies supplying the infrastructure needed to run them.

Investors who recognized that early understood something critical: AI demand wasn’t the issue. Compute supply was. Investors who identified that compute bottleneck early – and positioned before Wall Street fully priced it in – captured historic upside.

But to harvest those gains, you have to leave the party before it ends.

For example, I recommended shares of Advanced Micro Devices Inc. (AMD) to my Fry’s Investment Report subscribers in March 2025 as a play on the compute bottleneck. Just about six months later, in October, we booked 107% gains.

We harvested those gains quickly because I saw the compute party was ending. That bottleneck was no longer driving AMD’s share-price growth. Since the end of October, AMD has dropped more than 20%. Over that same stretch, Nvidia has fallen about 15% and Broadcom is down roughly 10%.

While the AI compute bottleneck hasn’t disappeared, its greatest gains are likely behind us. But AI development didn’t stop. That means more bottlenecks are already emerging.

Just like the GPU shortage that forced Sam Altman to pause new ChatGPT subscriptions in 2023, these moments remind us that technological revolutions often run into real-world limits.

In fact, I believe the market could begin recognizing these constraints as soon as April 24, when several of the largest AI hyperscalers report earnings. If those companies begin acknowledging the supply limits forming around metals, electricity, and memory, investors may suddenly realize the AI boom is running into physical limits.

That’s why I’ll be discussing this idea in more detail during FutureProof 2026 on Wednesday, March 18 at 1 p.m. ET.
During that free broadcast, I’ll explain why these infrastructure constraints could soon trigger a major shift in the AI trade – and why shortages in metals, electricity, and memory are becoming the next major bottlenecks. You can reserve your spot by going here.

I’ll also share the names and tickers of 15 companies already beginning to benefit from these emerging bottlenecks.

If history is any guide, the next Nvidia-style winner may come from the companies solving AI’s newest constraints. Sign up here.

Regards,
